http://www.seobook.com/corporate-seo-services

http://www.ariozick.com/how-google-wants-to-destroy-small-business-online/

http://searchengineland.com/enterprise-seo-a-plumbing-problem-29237

link profile seen as a whole

http://www.bing.com/community/blogs/webmaster/archive/2009/06/19/links-t...

http://googlewebmastercentral.blogspot.com/2009/10/dealing-with-low-qual...

http://www.wordtracker.com/academy/brent-payne-interview

http://www.stonetemple.com/articles/interview-brent-payne.shtml

http://www.foliomag.com/video/new-york-times-chief-search-strategist-mar...

Corporate SEO is largely about trimming away the fat and leveraging the assets you already have. And perhaps limited link buying. ;)

The corporate SEO faces a number of challenges, many of which are to do with procedure and diplomacy. We'll take a look at these challenges and how to handle them. We'll also look at the specific technical aspects of SEO on corporate sites, and the strategic advantages particular to corporate sites.

Big Obstacles, Big Opportunities

The biggest obstacles in corporate SEO are political.

Corporate sites usually have a team of people working on them, and a number of stakeholders: managers, related divisions, designers, developers, content producers and writers. Some of these people will be openly hostile to a change in the way they work, and many may be unfamiliar with search engines and their requirements.

Into this environment walks the SEO.

No matter what, you're going to ruffle a few feathers! How do you deal with the myriad of demands and internal politics?

Get Management Buy In

The first step to achieving good SEO outcomes within an organizational structure is to get management buy-in. Think of internal managers as customers.

Given that management has probably already hired you, getting their buy-in should be relatively straightforward. Management will want to see facts, figures and strategies that support the business case. Prepare presentations that demonstrate your proposed strategy, how it supports the business case, how long it will take to achieve, and what your measures of success will be.

What type of facts and figures will they want to see?

1. Show the benefit you provide above the cost of hiring you

If they've hired a full-time inhouse SEO, it's most likely they've already done this calculation, but it doesn't hurt to reinforce it.

For example, let's say they're paying you $50K per year. Overhead for employees is likely 50% of the wage figure again. Can you come up with a business case that shows how you'll provide more than $75K in value per year? You don't need to state this figure explicitly, merely place a ballpark value on your strategy.
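The arithmetic behind that $75K figure can be sketched in a few lines of Python. The function name and the default overhead rate are illustrative assumptions taken from the example above; substitute your own salary and overhead figures.

```python
def break_even_value(salary: float, overhead_rate: float = 0.5) -> float:
    """Yearly value an in-house SEO must generate to cover their own cost.

    overhead_rate is the assumed employer overhead as a fraction of salary
    (0.5 means overhead is 50% of the wage figure again).
    """
    return salary * (1 + overhead_rate)


annual_cost = break_even_value(50_000)  # salary from the example above
print(f"Break-even value: ${annual_cost:,.0f}")  # Break-even value: $75,000
```

Any strategy you present should be able to show, at least roughly, a return above this number.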

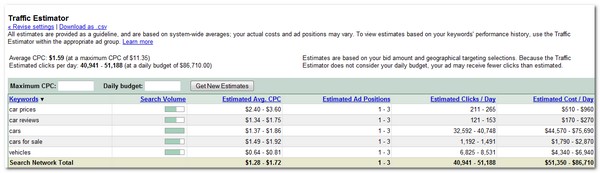

Value can be difficult to assess, but you can look at what they're spending on PPC and compare. If they're not spending on PPC, examine the keyword bid prices in tools such as the Google Adwords Pricing calculator. Estimate the value of the keyword terms and traffic you're likely to receive for your SEO campaign.
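One rough way to put a number on this is to price your expected organic clicks at what they would cost through PPC. The sketch below is only an illustration: the keywords, search volumes, click shares and bid prices are made-up assumptions, and the real inputs would come from your keyword research and bid tools.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Keyword:
    term: str
    monthly_searches: int   # assumed figure from a keyword research tool
    est_click_share: float  # assumed share of searchers who click your listing
    bid_price: float        # assumed cost of one PPC click on this term


def yearly_ppc_equivalent(keywords: Iterable[Keyword]) -> float:
    """Estimate what a year of the expected organic clicks would cost via PPC."""
    total = 0.0
    for kw in keywords:
        monthly_clicks = kw.monthly_searches * kw.est_click_share
        total += monthly_clicks * kw.bid_price * 12
    return total


# Hypothetical keyword list, for illustration only.
keywords = [
    Keyword("blue widgets", 10_000, 0.10, 1.50),
    Keyword("widget repair", 4_000, 0.05, 2.00),
]
value = yearly_ppc_equivalent(keywords)
print(f"Estimated yearly value: ${value:,.0f}")
```

Compare the result against the cost of employing you. If the estimate is comfortably higher, you have the beginnings of a business case.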

Try to find out the business plan. What are the company's objectives? What are the objectives of the division? How are they measured? Businesses often have KPIs (Key Performance Indicators). Find out what these are, and fit your strategy to these metrics.

The very fact you're asking for these details will likely impress those who have hired you.

2. Show Where The Site Is Now, And Where They Can Be With Good SEO

Demonstrate the position they occupy now, and show where you can get them to *if* your strategy is followed. Prepare charts of current rankings, traffic levels, conversion rates, and overall market trends.

Here's an example business case template you can follow:

- Background - why SEO is useful

- The costs of SEO - the cost to the organization of not doing SEO

- The benefits to the company of SEO - focus on the business benefits

- Why the organization needs SEO - show competitive advantage potential, decreased advertising costs, increased exposure etc

- General Principles of SEO - stay broad and high level, avoid technical minutiae

- Recommended scope and objectives of your SEO strategy

- Risks - outline the conditions that will prevent you from executing your strategy

- Cost of your SEO strategy - include any external costs, such as directory submissions, paid placement etc

- Projected cost/benefit analysis for the organization - compare with other advertising channels, such as PPC

- Measurement, outcomes, milestones and evaluation - set your KPIs

- Anticipated overall results - also include a timeframe

What pushes management's buttons? Is it traffic numbers? Is it seeing the company top of the search results? Is it increased sales? It might be a combination of these things. Nail - in writing - what it is they really want to see delivered, then figure out how to deliver it.

3. Show Them What Their Competitors Are Doing

Is there a competitor who is doing well with their SEO? Prepare facts and figures that show where your company is being outgunned. There is nothing managers, particularly marketing managers, like less than being outgunned by their competitors. If the competitors are using good SEO strategy, you can use this as justification for your strategy.

One objection you may hear is that the company is already running PPC. So why do they need SEO? Impress upon them that most people click on the main search results. SEO clicks are "free", especially over the long term.

Also, a study by iProspect showed that top search results can result in brand equity for the highly ranked sites:

Finally, it continues to be apparent that brand equity is conveyed upon companies whose digital assets appear among the top search results by roughly a third of the search engine users. In 2008, 39% of search engine users believe that the companies whose websites are returned among the top search results are the leaders in their field. This figure has grown from 36% in 2006, and 33% in 2002.

Once your strategy is agreed to, you should have the backup you'll need to undertake the hard part.

Convincing The Minions

Various people within the web team need to buy into SEO in order for it to work.

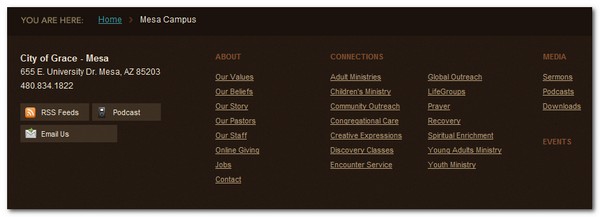

Some companies locate their web team in their IT division, others place their web team in their marketing division. Often, these two business units share ownership of the strategy. It is important to determine which division has the most control, especially over aspects such as site structure, content production, and overall strategy. Get buy-in from the appropriate management team.

Look to establish rapport with, and train, the various people who occupy the following roles.

1. The Manager/Team Leader

You must have buy-in from the person with the most control over the business unit responsible for web strategy.

Managers tend to respond well to anything that helps them achieve departmental goals. Look for areas where synergy exists. For example, marketing managers often have traffic goals, and similar visitor metric milestones. Show them how SEO will help meet those objectives.

This is why it is important to frame SEO in business terms, rather than purely a technical process.

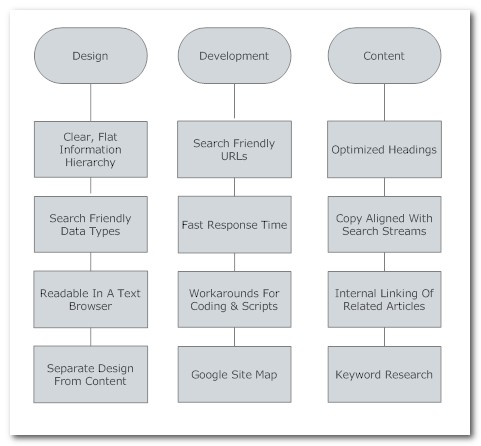

2. The Designer

The designers are responsible for the look and feel of the site. They are probably also responsible for site architecture. Architecture and design are two areas where you are likely to experience a lot of push-back.

There is good reason for this.

What is good for SEO may not be good for users or brand aesthetics. This area needs to be carefully balanced. If the designers think the SEO is compromising the look, feel and operation of the site, then you're not going to get very far, no matter how good your intentions are.

If your designers are familiar with usability, and good designers will be, you're in luck. There are a lot of usability integration points that work for users, designers and SEOs. For example, breadcrumb navigation can be great for usability and SEO, as it allows for the propagation of keywords, and provides strong internal link structure.

Are there disability access laws that the company must comply with? Depending on your legal jurisdiction, there may be disability discrimination laws with regard to access, and these can apply to websites. For example, Target was the subject of a legal case brought by the National Federation Of The Blind.

The lawsuit alleged that Target had not made the minimum changes necessary to its Web site to make the site compatible with screen access technology and to allow blind users to access the site to purchase products, redeem gift cards, find Target stores, and perform other functions available to sighted customers.

In order to comply, sites need to provide equal access for those with impairments. If a person is visually impaired, then compliance may mean that the site must be able to be read by a text-to-speech converter in order to be accessible. Of course, any site that can be used and navigated with a text browser will also be search engine friendly. This can be a good angle to use if the law in your jurisdiction supports it, and you are otherwise having problems convincing the designers - and managers - to make a site more search engine friendly.

Also be on the lookout for other areas that require little change and provide natural synergies.

These areas include:

3. Writers & Content Producers

The writers provide the words. The content producers may provide video, pictures, and other media. You'll probably be dealing mostly with the writers.

Writers, especially if they have been writing professionally for a long time, can be very set in their ways. Writers schooled in journalistic or copywriting techniques use methods that predate internet search engines, and often the internet itself. Old habits die hard.

The problem with such writing is that it may not incorporate keyword terms in the right places - particularly headings - or in the frequency you require. Communicating this concept can be difficult, especially with journalists, who like things presented in terms they can understand, usually within a sentence or two.

Avoid SEO jargon. Talk in their terms, not yours. Look for similar concepts and use the journalists' own terminology to describe them. For example, both journalists and SEOs know the power of headlines. Go for clarity and be descriptive, as opposed to being generic. Both write in an inverted pyramid, top-down style i.e. the most important facts - and keywords - are likely to appear at the top of the article. Both quote sources i.e. an opportunity for keywords within a link. And so on.

Align their goals with yours. Show writers how much potential traffic there is out there and how keyword research can be used to suggest article topics and title ideas. Show them that by following a few SEO principles, they can get more readers reading their articles. Writers often have communications objectives i.e. to achieve wider reach and exposure, so there might be some obvious, natural synergies to be had. All writers have egos, and like to have their articles widely read.

Check out this tactic, used by Rudy De La Garza Jr. at Bankrate Inc. to help convince writers to adopt SEO practices:

At Bankrate, Mr. De La Garza showed editorial employees that, for some articles, deciding on about 10 main keywords before writing could help increase their number of page views. Writers were already vying for bragging rights to the most popular articles. He told them: "You know what, guys? If we apply a few SEO tactics here, I can help you win the weekly battle," he says.

Writers need to research topics. I've often found writers to be very receptive to SEO data mining techniques i.e. the frequency of keyword searches. Show them how keyword research can be a good way to research topics for articles. They can ensure they are writing on popular themes, or can twist their copy a little in order to tap into search streams.

4. The Developer

The developers are responsible for the technical aspects of the website.

Developers are often located in IT, yet you rely on them to perform a marketing function. Developers tend to work on specific projects. This can cause a conflict with the SEO, whose job is very much a work in progress.

Try to embed SEO into the development process. Developers usually work to a brief or requirements document, so include SEO where appropriate. Look for any design specifications that will affect SEO and get these sorted out before the developer starts coding.

One area that is likely to present problems is the structure of URLs. A developer doesn't care if the URL is long and unwieldy. It's probably never been cited as a problem before. Ensure the document specifies a URL structure and site hierarchy that gels with SEO i.e. descriptive, unique file names and a clear, flat directory structure. If the site has already been built, look into rewriting existing URLs.

Some of the marketing advantages include:

- The URLs look nicer and will likely get clicked on more often

- The URLs will provide better anchor text if people use the URLs as the link anchor text

- If you later change CMS programs, having clean core URLs associated with content makes it easier to mesh that content with the new CMS

- The benefit Google espouses for dynamic URLs (Googlebot being able to stab more random search attempts into a search box) is only beneficial if your site structure is poor and/or you have way more PageRank than content (like Wikipedia or TechCrunch)
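To make the URL discussion concrete for a developer, here is a minimal Python sketch of turning page titles into descriptive URL segments and building a map from old dynamic URLs to clean ones. The legacy URL, section name and page title are hypothetical, and how the resulting map is applied (rewrite rules, redirects, CMS routing) depends entirely on your own server and CMS.

```python
import re
import unicodedata


def slugify(text: str) -> str:
    """Turn a title into a lowercase, hyphen-separated URL segment."""
    # Strip accents and drop any characters that cannot be expressed in ASCII.
    ascii_text = (
        unicodedata.normalize("NFKD", text)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Collapse every run of non-alphanumeric characters into a single hyphen.
    return re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")


def clean_url(section: str, title: str) -> str:
    """Build a short, descriptive path with a flat section/page hierarchy."""
    return f"/{slugify(section)}/{slugify(title)}/"


# Hypothetical legacy URL -> (section, title) pairs exported from the CMS.
legacy_pages = {
    "/index.php?id=482&cat=7": ("Products", "Blue Widgets & Accessories"),
}

url_map = {old: clean_url(*page) for old, page in legacy_pages.items()}
print(url_map)
```

A map like this gives the developer a concrete specification to work from, rather than a vague request for "nicer URLs".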

Developers will be aware of the need for site response speed. They also need to ensure the site is crawlable. This job has been made somewhat easier, of late, given the introduction of Google Sitemaps.
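As an illustration of how little work a basic sitemap involves, the Python sketch below builds a minimal XML sitemap using the sitemaps.org 0.9 namespace. The example.com URLs are placeholders, and a real site would normally generate the URL list from the CMS or database rather than hard-code it.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML sitemap listing each URL in a <loc> element."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder URLs, for illustration only.
sitemap_xml = build_sitemap([
    "http://www.example.com/",
    "http://www.example.com/products/",
])
print(sitemap_xml)
```

The output can be saved as a file on the site and submitted to the search engines' webmaster tools.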

There might be various coding practices that can be changed in order to enhance SEO. For example, try replacing JavaScript behaviors, particularly for menus, with CSS techniques. Are there other coding aspects that could be enhanced? It might provide an opportunity for the developer to train in new technologies. I've yet to meet a developer who didn't want to learn new ways of coding. It all adds to their CV.

5. Legal

In big companies, copy is usually run past legal before any changes are made. Lawyers, as a profession, are typically risk averse. This can play havoc with SEO strategies, especially edgy link bait content designed to attract links!

The only way to deal with this is to look for clear guidelines from legal in advance of implementing content strategies. Legal will almost certainly take precedence over SEO as companies look to protect their downside risk. On the bright side, the content of a page - especially if one or two words are changed - isn't going to make or break an SEO strategy.

SEO Best Practices For Corporates

In any change process, there is a lot of retraining that needs to be done. SEO is no exception.

The more people who understand what you do, and how and why you're doing it, the easier your job will be. There is no one way of achieving this, other than to communicate as often as possible. Look at training others as being a big part of your job, and something that should be done on an ongoing basis.

Once you've got people onside, you need to start building procedures into the work-flow itself.

Get a copy of the web site life-cycle and all documents relating to procedure, process and specification. Amend all documents to incorporate SEO requirements into the process. Highlight all areas that present a risk, and make notes about the consequences of not mitigating these risks. With any amendment to process, there will likely be meetings in which you'll need to justify these changes, so come prepared.

An example of a change of process might be:

When publishing new articles, writers should search for existing articles, and link to them in the related articles section

Look for ways that will make your changes easy to incorporate. For the example above, have the designers build a "Related Articles" section into the template, so the requirement of internal linking becomes a natural part of the article creation process.
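If the CMS stores keywords or tags against each article, the "Related Articles" section could even suggest candidates automatically. The Python sketch below ranks existing articles by how many keywords they share with a new one. It assumes a simple title-to-keywords mapping, and the article titles and keywords are invented for illustration.

```python
def related_articles(new_keywords, archive, limit=3):
    """Rank archived articles by the number of keywords shared with a new one.

    archive maps an article title to its list of keywords (assumed structure).
    """
    wanted = {k.lower() for k in new_keywords}
    scored = []
    for title, keywords in archive.items():
        shared = wanted & {k.lower() for k in keywords}
        if shared:
            scored.append((len(shared), title))
    # Most shared keywords first; ties broken alphabetically by title.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [title for _, title in scored[:limit]]


# Hypothetical archive, for illustration only.
archive = {
    "Fixed vs Variable Mortgage Rates": ["mortgage rates", "fixed rate", "variable rate"],
    "How To Refinance": ["refinance", "mortgage rates"],
    "Best Savings Accounts": ["savings", "interest"],
}
suggestions = related_articles(["mortgage rates", "fixed rate"], archive)
print(suggestions)
```

Writers still make the final call on which links to include. The point is to make internal linking the path of least resistance.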

Here are some broad requirements, listed beneath each job function:

Wider Strategy

Big, corporate sites have advantages that small sites do not. Let's look at a few aspects, and how you can leverage them.

Brand Awareness

Corporate sites often have established brand awareness.

Let's consider Coca-Cola.com. Internal politics aside, getting more search exposure for such a ubiquitous brand, with a PR8, would be a cakewalk. It would simply be a case of ensuring the site is crawlable, the directory structure is well organized, and that keyword rich content is added on a regular basis.

However, if we take a close look at the Coca-Cola site, we can see that if they use SEO at all, it is most likely losing out to internal politics. If we do a site query, site:www.coca-cola.com, we can see that they don't have many pages indexed: around 350, most of which are regional versions of the site. The title tagging is poor. There is a lot of uncrawlable Flash, and the site architecture isn't conducive to SEO. Does any of this matter? Probably not. Coke will sell a lot of soft drink regardless. However, they are throwing away a cheap win in terms of internet marketing by not being more search focused. It would be a major failure for any corporate site that sells direct to the consumer, like an online retailer.

These well-known sites need to mainly focus on internal factors. The external factors are well established.

Some big brands don't have great linking. Perhaps no one has ever considered external linking to be important. Getting links for well known sites is relatively easy. Ask suppliers and customers for links. News media, particularly trade media and business media, will likely be interested in news releases from your company. If you have a PR division, make sure they are using optimized PR templates that include links back to your site. Leverage the extensive network of relationships that corporates usually have.

Make use of sales. Sales people typically have contacts throughout the industry, and these contacts can be useful when it comes to linking. Think of things you can give the customer, in order to help the sales people make a sale, or deepen the existing relationship they have with them. For example, can you profile customers on your site? A customer may welcome a case study that shows them in a good light, and they'll almost certainly link to it.

Get Offline Data Online

Big companies usually have a wealth of data stored on internal networks. Try to get as much of this as possible onto the web site. Obviously, data that is commercially sensitive can't be made public, but there is likely a lot of material that can be marked up and published.

Marketing departments often don't consider such data because they're thinking of the web site as a brochure. However, the more content you have, especially if your site is well linked, the more chances you have of getting search engine visitors. If this type of content doesn't support the brand objectives, place it in an area of the site that is reached mainly by people arriving via search engines. Perhaps create a general information section.

Sponsorship

Corporates often sponsor events. Make sure the organizations your company sponsors link back to you. Have your PR people write up search friendly press releases and leverage these events for all they're worth.

Further Reading