What is SEMrush?

A sweet new competitive research tool called SEMrush has hit the market. It can be seen as a deeper extension of the SEO Digger project (adding PPC data and tracking AdWords keywords) and as a competitor to services like Compete.com and SpyFu (which recently launched SpyFu Kombat).

Brief Tool Overview

Competitive research tools can help you find a baseline for what to do & where to enter a market. Before spending a dime on SEO (or even buying a domain name for a project), it is always worth putting in the time to get a quick lay of the land & learn from your existing competitors.

- Seeing which keywords are most valuable can help you figure out which areas to invest the most in.

- Seeing where existing competitors are strong can help you find strategies worth emulating. Researching their performance may also uncover new pockets of opportunity & keyword themes that didn't show up in your initial keyword research.

- Seeing where competitors are weak can help you build a strategy to differentiate your approach.

Enter a competitor's URL into SEMrush & you will quickly see where your competitors are succeeding and where they are failing, along with insights on how to beat them. SEMrush offers:

- granular data across the global Bing & Google databases, along with over two dozen country-specific Google databases (Argentina, Australia, Belgium, Brazil, Canada, Denmark, Finland, France, Germany, Hong Kong, Hungary, India, Ireland, Israel, Italy, Japan, Mexico, Netherlands, Norway, Poland, Russia, Singapore, Spain, Sweden, Switzerland, Turkey, United Kingdom, United States)

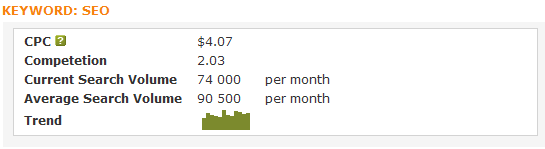

- search volume & ad bid price estimates by keyword (which, combined, serve as an estimate of keyword value) for over 120,000,000 keywords

- keyword data by site or by page across 74,000,000 domain names

- the ability to look up related keywords

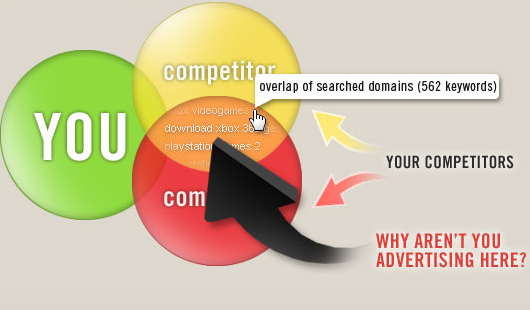

- the ability to directly compare domains against one another to see relative strength

- the ability to compare organic search results versus paid search ads to leverage data from one source into the other channel

- the ability to look up sites which have a similar ranking footprint as an existing competitor to uncover new areas & opportunities

- historical performance data, which can help determine whether a site has had manual penalties or algorithmic ranking filters applied against it

- a broad array of new features like tracking video ads, display ads, PLAs, backlinks, etc.

While SEMrush is a paid service, its free search still lets you get a great sampling of their data. SEMrush is easily our favorite competitive research tool. We like it so much that we also license their data to offer our paying subscribers a competitive research tool powered by their database.

In-Depth Review

SEMrush vs Compete.com

The big value-add SEMrush has over a tool like Compete.com is that it shows cost-per-click estimates (scraped from Google's Traffic Estimator tool) and estimated traffic volumes (from the Google AdWords keyword tool) next to each keyword. So rather than only showing how traffic is distributed across sites, this tool can show how keyword value is distributed across them (keyword value * estimated traffic).
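The keyword value idea above can be sketched as a small Python calculation. This is a minimal illustration, assuming you already have a CPC estimate and an estimated monthly traffic figure for each keyword a site ranks for; the function names and sample numbers are hypothetical and do not reflect SEMrush's actual data or traffic model.

```python
from typing import Dict, List, Tuple

# Hypothetical input: keyword -> (estimated CPC in USD, estimated monthly visits).
RankedKeywords = Dict[str, Tuple[float, float]]


def keyword_value(cpc: float, estimated_traffic: float) -> float:
    """Estimated monthly value of one keyword: CPC times estimated traffic."""
    return cpc * estimated_traffic


def value_distribution(ranked: RankedKeywords) -> List[Tuple[str, float]]:
    """Per-keyword value for a site, sorted from most to least valuable."""
    values = [(kw, keyword_value(cpc, traffic)) for kw, (cpc, traffic) in ranked.items()]
    return sorted(values, key=lambda item: item[1], reverse=True)


def site_value(ranked: RankedKeywords) -> float:
    """Total estimated monthly traffic value across all ranked keywords."""
    return sum(value for _, value in value_distribution(ranked))


if __name__ == "__main__":
    # Illustrative numbers only.
    example_site = {
        "seo tools": (4.50, 1000),
        "keyword research": (3.00, 500),
    }
    print(value_distribution(example_site))  # [('seo tools', 4500.0), ('keyword research', 1500.0)]
    print(site_value(example_site))          # 6000.0
```

Keeping the per-keyword breakdown separate from the total makes it easy to see which handful of terms drive most of a site's estimated value.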

Normalizing Data

Using these estimates does not produce results as accurate as Compete.com's data licensing approach, but if you own a site and know what it earns, you can set up a ratio to normalize the differences (at least to some extent: within the same vertical, for sites of similar size, using a similar business model).

One of our sites that earns about $5,000 a month shows a Google traffic value of close to $20,000 a month, which gives a ratio of:

$5,000 / $20,000 = 0.25

A similar site in the same vertical shows a traffic value of $10,000, so its estimated earnings are:

$10,000 * 0.25 = $2,500
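The arithmetic above is easy to do by hand, but if you track several competitors it may help to script it. A minimal Python sketch follows; the function names are hypothetical, and the output inherits every caveat about comparing sites in the same vertical, of similar size, with a similar business model.

```python
def normalization_ratio(actual_earnings: float, estimated_value: float) -> float:
    """Ratio of what your own site really earns to its estimated traffic value."""
    if estimated_value <= 0:
        raise ValueError("estimated_value must be positive")
    return actual_earnings / estimated_value


def estimate_competitor_earnings(competitor_value: float, ratio: float) -> float:
    """Scale a competitor's estimated traffic value by your own site's ratio."""
    return competitor_value * ratio


if __name__ == "__main__":
    ratio = normalization_ratio(5_000, 20_000)
    print(ratio)                                        # 0.25
    print(estimate_competitor_earnings(10_000, ratio))  # 2500.0
```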

Disclaimers With Normalizing Data

It is hard to monetize traffic as well as Google does, so in virtually every competitive market your profit per visitor (after expenses) will generally be lower than Google's. Some reasons why:

- In some markets advertisers are willing to lose money to buy market share, while in other markets they may overbid just to block out the competition.

- Some merchants simply have fatter profit margins and can afford to outbid affiliates.

- It is hard to integrate advertising into your site anywhere near as aggressively as Google does while still creating a site that can gather enough links (and other signals of quality) to earn a #1 organic ranking in competitive markets, so there will typically be some amount of slippage.

- A site that offers editorial content wrapped in light ads will not convert eyeballs into cash anywhere near as well as a lead generation oriented affiliate site would.

SEMrush Features

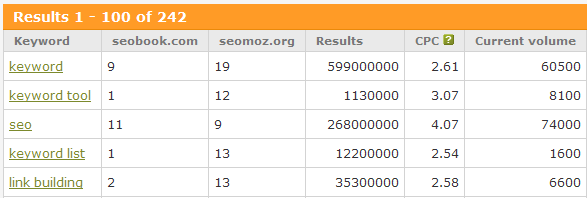

Keyword Values & Volumes

As mentioned above, this data is scraped from the Google Traffic Estimator and the Google Keyword Tool.

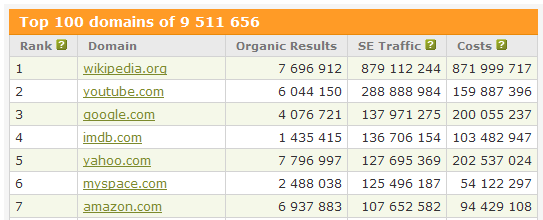

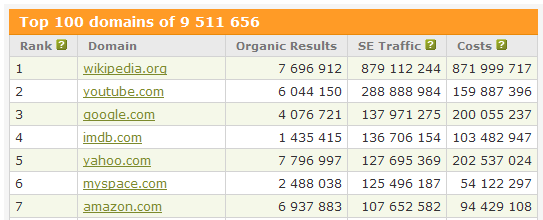

Top Search Traffic Domains

A list of the 100 domain names estimated to receive the most valuable downstream search traffic from Google.

You could get a similar list from Compete.com's Referral Analytics by running a downstream report on Google.com, although I think that might also include traffic from some of Google's non-search properties like Reader.

Top Competitors

Here is a list of sites that rank for many of the same keywords that SEO Book ranks for.
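How SEMrush actually matches competitors isn't described here, but one simple way to approximate the idea is to count how many of your ranked keywords each other domain also ranks for. A hypothetical Python sketch, assuming you already have a set of ranked keywords per domain (the domains and keywords below are made up):

```python
from typing import Dict, List, Set, Tuple


def top_competitors(
    my_keywords: Set[str], others: Dict[str, Set[str]], n: int = 10
) -> List[Tuple[str, int]]:
    """Rank other domains by how many of my keywords they also rank for."""
    scores = [(domain, len(my_keywords & kws)) for domain, kws in others.items()]
    # Drop domains with no overlap, then sort by shared count (desc), then by name.
    scores = [item for item in scores if item[1] > 0]
    scores.sort(key=lambda item: (-item[1], item[0]))
    return scores[:n]


if __name__ == "__main__":
    mine = {"seo", "link building", "keyword research", "ppc"}
    others = {
        "a.example": {"seo", "ppc"},
        "b.example": {"seo", "link building", "keyword research"},
        "c.example": {"recipes"},
    }
    print(top_competitors(mine, others))  # [('b.example', 3), ('a.example', 2)]
```

Raw overlap counts tend to favor very large sites; normalizing by set size (for example, Jaccard similarity) is a common alternative, though whether the tool does anything like that is not stated.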

Overlapping Keywords

Here is a list of a few keywords where SEO Book and SEOmoz compete in the rankings.

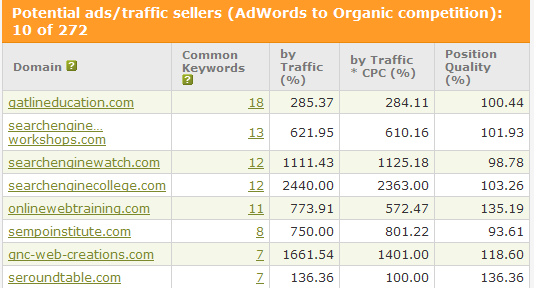

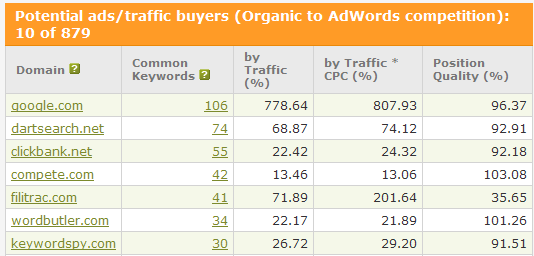
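A head-to-head overlap report like this boils down to intersecting two sites' ranking data. A minimal Python sketch, assuming each site's rankings are available as a hypothetical keyword-to-position mapping (the sample values are invented):

```python
from typing import Dict, List, Tuple


def overlapping_keywords(
    site_a: Dict[str, int], site_b: Dict[str, int]
) -> List[Tuple[str, int, int]]:
    """Keywords both sites rank for, with each site's position, sorted by keyword."""
    shared = site_a.keys() & site_b.keys()  # dict key views support set operations
    return [(kw, site_a[kw], site_b[kw]) for kw in sorted(shared)]


if __name__ == "__main__":
    site_a = {"seo": 3, "link building": 5, "ppc": 12}
    site_b = {"seo": 1, "ppc": 8, "social media": 2}
    for keyword, pos_a, pos_b in overlapping_keywords(site_a, site_b):
        print(f"{keyword}: site A #{pos_a}, site B #{pos_b}")
```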

Compare AdWords to Organic Search

These are sites that rank organically for keywords that SEO Book is buying through AdWords.

And these are sites that buy AdWords ads for keywords that SEO Book ranks for.
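These two reports can be thought of as the same lookup run in opposite directions: take one site's keywords from one channel and find the domains that show up for those keywords in the other channel. A hypothetical Python sketch (the function name, domains and keywords are all illustrative assumptions, not SEMrush data):

```python
from typing import Dict, List, Set


def cross_channel_matches(
    my_keywords: Set[str], other_channel: Dict[str, Set[str]]
) -> Dict[str, List[str]]:
    """Map each domain in the other channel to the sorted keywords it shares with mine."""
    matches: Dict[str, List[str]] = {}
    for domain, keywords in other_channel.items():
        shared = my_keywords & keywords
        if shared:
            matches[domain] = sorted(shared)
    return matches


if __name__ == "__main__":
    # Keywords I buy in AdWords vs. domains ranking organically for keywords.
    my_paid = {"seo training", "seo tools"}
    organic_rankings = {"x.example": {"seo tools", "seo blog"}, "y.example": {"cooking"}}
    print(cross_channel_matches(my_paid, organic_rankings))  # {'x.example': ['seo tools']}

    # Keywords I rank for organically vs. domains buying ads on keywords.
    my_organic = {"seo book", "link building"}
    advertisers = {"z.example": {"link building", "seo book"}, "w.example": {"ppc"}}
    print(cross_channel_matches(my_organic, advertisers))  # {'z.example': ['link building', 'seo book']}
```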

Once Upon a Time...

I was going to create a tool similar to this one about a year ago, until I hired a programmer who was an EPIC FAIL. The guy who managed that program is no longer selling programming services, and that makes the world a better place.

I actually made 3 attempts at such a tool. I bought a GREAT domain name, spec'd out the project, then planned on doing it...

- investor backed, but the investor decided to back out

- self funded, but I hired (1) a programmer who decided mid-project that he needed to make double what I make while working part-time, then (2) the worst programmers ever.

- a combination of heavy self-funding and the guidance of a badass VC, but I backed out due to a need to focus on this site

I spent most of this year focusing on trying to build our community and raise our editorial quality (both goals are going well, but require significant maintenance). We have had 4 strong hires in a row, so it seems like our luck has changed on that front. Recently I started working with a programmer who really clicks with me, often taking my ideas and making them way better than I intended.

If these guys had not made this tool, I was going to take another run at something like this early next year... which brings up a good point that a friend (and wicked intelligent open source programmer) named François Planque told me. He said all he had to do was think up a good idea and not act on it, and within 6 to 12 months someone else would have already launched it.

Entry cost is so low that a lot of great tools are going to get made in short order, but it is hard to win by sitting on a good idea. ;)