Google Webmaster Central Guide

Google Webmaster Central have just updated their toolset, so now might be a good time to review it.

For those new to Webmaster Central, Google offer a set of very useful tools that provide an insight into how Google handles your site. You can find out how your site is crawled, what keywords you rank for, and if there are any problems.

You can sign up here.

Let's step through the features, and share some ideas on how to best use the data Webmaster Central provides. For those already familiar with Webmaster Tools, it would be great if you could offer newer users some of your tricks and tips in the comments.

Check Out Our New Look

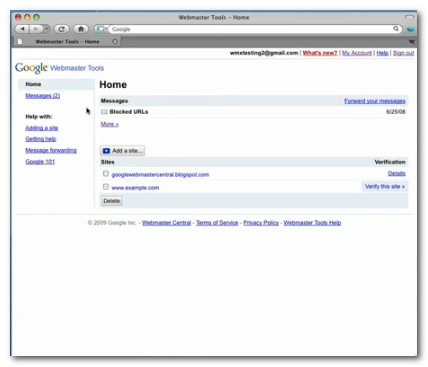

Once you've signed up, you'll be greeted with a dashboard screen. This is where you list all your sites.

Not a major change, but a nice, clean layout that makes it easier to navigate. The dashboard gives you a quick overview of top search queries, crawl errors, and links to your site.

A nice feature is that you can have data forwarded to your email address.

Google outlines the changes here.

The Toolset

Google have provided a useful toolset to help diagnose site problems, and give you insights into how to perform better in Google's index.

Site Maps

Webmasters should submit a Google Sitemap to help ensure their site is crawled.

Whilst not necessary, as Google should be able to crawl your site via links, it's a good idea to have the sitemap in case there are problems during the regular crawl. To create a Google sitemap, you can use Google's generator script. There are also various third party tools to help you do this.
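
If you'd rather roll your own than use a generator, here is a minimal sketch of building a sitemap file in Python. It assumes you already have a list of page URLs; the function name `build_sitemap` and the example.com addresses are made up for illustration, and it only writes the required `loc` element, not optional ones such as `lastmod`.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a sitemap XML string listing the given page URLs.

    Hypothetical helper for illustration; not Google's generator script.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as "&" in the URL text.
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Placeholder URLs; swap in your own pages.
    print(build_sitemap([
        "http://www.example.com/",
        "http://www.example.com/about",
    ]))
```

Save the output as an XML file on your server and submit its address in the Site Maps section.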

Also see "Subscriber Stats" below.

Crawler Access

You can use this tool to test your robots.txt file. Check out this article about why you might use a robots.txt file. You can also use this tool to generate a robots.txt file, based on drop-down options.
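
You can also sanity check a robots.txt file on your own machine before uploading it. The sketch below uses Python's standard `urllib.robotparser` module; the rules, the `is_allowed` helper, and the example.com URLs are placeholders, and Python's parser may not interpret every rule exactly the way Googlebot does, so treat it as a rough check rather than a replacement for Google's tester.

```python
from urllib.robotparser import RobotFileParser

# Example rules only; substitute the contents of your own robots.txt.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_allowed(robots_txt, user_agent, url):
    """Return True if the rules in robots_txt let user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in ("http://www.example.com/index.html",
                "http://www.example.com/private/report.html"):
        print(url, is_allowed(ROBOTS_TXT, "Googlebot", url))
```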

You can also use this tool to remove a URL from Google. Careful with that one ;)

Site Links

You can specify which site links you want to show. Sitelinks are the indented links below a listing in the search engine result pages.

Sitelinks look like this:

It's a good idea to specify pages that users will find most useful, and that display a consistent theme for your site. You can also block irrelevant pages from appearing in the site links using this tool.

Settings

If your site targets a specific country, you can specify this information. This is very useful if you have a .com, but target visitors in a country outside the US. The tool makes no difference if you already have a country specific TLD, however.

The preferred domain setting enables you to specify which URL you want crawled, the non-www version, or the www version.

Why would you do this?

www.acme.com and acme.com can be seen by crawlers as being two different domains. This can sometimes lead to duplicate content issues. So it's a good idea to pick one or the other, and specify it in Google tools.
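
Once you've picked a version, it helps to use it consistently in your own links and redirects too. Here is a small sketch of rewriting URLs to a preferred host. The function name `to_preferred_host` is hypothetical, acme.com is just the example domain from above, and hosts with an explicit port are deliberately left alone.

```python
from urllib.parse import urlsplit, urlunsplit


def to_preferred_host(url, preferred_host="www.acme.com"):
    """Rewrite the www / non-www variant of a host to the preferred one.

    URLs on other hosts (or with an explicit port) are returned unchanged.
    """
    parts = urlsplit(url)
    # Work out the bare domain so both variants can be recognised.
    if preferred_host.startswith("www."):
        bare = preferred_host[4:]
    else:
        bare = preferred_host
    if parts.netloc.lower() in (bare, "www." + bare):
        parts = parts._replace(netloc=preferred_host)
    return urlunsplit(parts)


if __name__ == "__main__":
    print(to_preferred_host("http://acme.com/widgets?id=3"))
```

The same mapping is what a server-side 301 redirect from one version to the other would enforce.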

The crawl rate setting allows you to slow down the search spider's crawl rate if you're having problems with the spider gobbling too many of your server resources. By default, Google sets the crawl rate for all sites.

Top Search Queries

Find out which keywords you rank for, which keywords send you the most traffic, and which results users clicked through on. If you rank for a lot of keyword terms, but aren't getting much click through, you might want to review the wording of your title tags.

You can also sort this data by device, such as mobile. You can find out which Google country domains send you the most traffic, as well as sorting by image search, and you can specify a date range.

Links To Your Site

You can find out which sites are linking to you, and what anchor text they're using. You can also download this data in the form of spreadsheets.

Keywords

Find out the most common keywords found on your site.

Are these words consistent with the theme of your site? If not, you need to balance out the content by adding more on-topic pages.

Internal Links

Shows which pages are most heavily linked internally, and from where. You can also see if pages are linked from non-www or www "domains". Aim to get internal linking consistent i.e. from either non-www or www but not both, and make sure your money pages are well linked.

Subscriber Stats

Find out how many people subscribe to your RSS feed via Google Reader. You can also submit a feed as a sitemap.

Crawl Errors

This useful diagnostic tool will show you which pages Google is having problems crawling. There is a breakdown by web, mobile, and mobile WML.

Crawl Stats

Use this tool to find out how many pages are crawled per day, and the time spent downloading those pages. This can reveal problems such as slow servers and page load errors.

Crawl stats also give you a PageRank distribution chart. However, like the toolbar, take this data with a grain of salt. I've found it bears little relationship to site ranking, and the real PR of pages is only known by Google.

HTML Suggestions

Use this tool to find duplicate and missing title and description tags.

Tips On How To Use This Data

A recent Google presentation outlined this process for using Webmaster Tools to find and fix broken links. The bigger your site is, the more likely broken links are to crop up. This tool is also very useful if you've recently moved your site, and now have different URLs.

- Download your crawl error sources report from Webmaster Tools

- Fix any "on site" not found links

- Group external not found links by domain and sort

- Evaluate external not found links to prioritize

- Contact external sites to get links fixed

- Set up redirects for links that can't be fixed

- Implement a 404 page

- Make reviewing and resolving not found links a periodic task

- Evaluate how top ranking data changes over time, and look for ways to fix SEO issues if any rankings disappear
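
The "fix on site links" and "group external links by domain and sort" steps are easy to script. This is a rough sketch that assumes you have already loaded the crawl error report into (missing URL, linking page) pairs; the actual download format may differ, and the function name and sample data are made up.

```python
from collections import Counter
from urllib.parse import urlsplit


def split_not_found_sources(rows, own_hosts):
    """Separate on-site broken links from external ones.

    rows: iterable of (missing_url, linking_page_url) pairs (assumed layout).
    own_hosts: set of host names that belong to your site.
    Returns (on_site_rows, external_domains), where external_domains is a
    list of (domain, broken_link_count) sorted from most to fewest.
    """
    on_site = []
    external = Counter()
    for missing_url, source_url in rows:
        host = urlsplit(source_url).netloc.lower()
        if host in own_hosts:
            on_site.append((missing_url, source_url))
        else:
            external[host] += 1
    return on_site, external.most_common()


if __name__ == "__main__":
    sample = [
        ("/old-a", "http://www.acme.com/index"),
        ("/old-b", "http://blog.example.org/post"),
        ("/old-c", "http://blog.example.org/other"),
        ("/old-d", "http://forum.example.net/t/1"),
    ]
    fix_now, contact = split_not_found_sources(sample, {"www.acme.com", "acme.com"})
    print(fix_now)
    print(contact)
```

The domains at the top of the second list are the ones sending the most broken links, so they are a reasonable place to start when contacting other sites.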

Drive More Traffic

Webmaster Tools will tell you which keywords you rank for, some of which you may not have been aware of.

Go to the keyword ranking page, and see where you rank for certain terms. Are any of these terms valuable to you? You can estimate the monetary value of keywords by using the Google AdWords estimator.

Find the page that is ranking well, and optimize further, either by tweaking the on-page content, boosting that page in your internal linking structure, or increasing the number of external links.

Remove Duplicate Tags & Descriptions

You're unlikely to rank for pages that Google thinks might be duplicates. Make sure all your pages have unique title and description information. You can use the HTML Suggestions tool to do this. Again, this is a task you should perform periodically.
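
If you keep your own list of pages and titles (from a CMS export or a crawl, say), you can run a similar check yourself between visits to Webmaster Tools. A minimal sketch, assuming a dictionary that maps each URL to its title text; the `audit_titles` name and the sample pages are hypothetical, and the same idea works for meta descriptions.

```python
from collections import defaultdict


def audit_titles(pages):
    """Find duplicate and missing titles.

    pages: dict mapping URL -> title text (None or "" if the title is missing).
    Returns (duplicates, missing): duplicates maps a normalized title to the
    URLs sharing it, and missing lists URLs with no title at all.
    """
    by_title = defaultdict(list)
    missing = []
    for url, title in pages.items():
        # Ignore case and extra whitespace when comparing titles.
        normalized = " ".join((title or "").split()).lower()
        if not normalized:
            missing.append(url)
        else:
            by_title[normalized].append(url)
    duplicates = {t: urls for t, urls in by_title.items() if len(urls) > 1}
    return duplicates, missing


if __name__ == "__main__":
    dupes, no_title = audit_titles({
        "/a": "Acme Widgets",
        "/b": "acme  widgets ",
        "/c": "Contact Us",
        "/d": "",
    })
    print(dupes)
    print(no_title)
```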

Enhance 404 Error Pages

Got any page not found errors?

You can make your 404 page a bit more useful by embedding a Google widget that helps your visitors find what they're looking for by providing suggestions based on the incorrect URL. This will hopefully prompt people to search further, rather than click back.

Enhance Clickthrough

The top queries tool features a column that shows you which pages users clicked through on most often.

If you've got a lot of pages ranking, but few click-thrus, you need to tweak your title tag data. Also, load up those search results and see what title tags your competition is using. Someone else is getting those click-thrus, so it's a good lesson to help you zone in on user intent for certain keywords. Keep tweaking until more pages receive click-thrus.
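
To decide which title tags to tweak first, you could flag the queries where you show up often but rarely get the click. The sketch below assumes you have copied the top queries data into (query, impressions, clicks) rows; that layout, the function name, and both thresholds are assumptions to adjust for your own site.

```python
def low_clickthrough_queries(rows, min_impressions=100, max_ctr=0.02):
    """Return (query, ctr) pairs with plenty of impressions but few clicks.

    rows: iterable of (query, impressions, clicks) tuples (assumed layout).
    Thresholds are arbitrary starting points, not recommended values.
    """
    flagged = []
    for query, impressions, clicks in rows:
        # Skip queries with too little data (this also avoids dividing by zero).
        if impressions < max(min_impressions, 1):
            continue
        ctr = clicks / impressions
        if ctr <= max_ctr:
            flagged.append((query, ctr))
    # Worst click-through rate first.
    return sorted(flagged, key=lambda item: item[1])


if __name__ == "__main__":
    sample = [
        ("blue widgets", 1000, 5),
        ("acme widgets", 500, 60),
        ("widget repair", 50, 0),
        ("cheap widgets", 400, 8),
    ]
    for query, ctr in low_clickthrough_queries(sample):
        print(f"{query}: {ctr:.1%}")
```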

Got any tricks and tips? Add them to the comments.

Comments

Nice summary Peter.

Just that you may want to change this "You can specify which site links you want to show." to "You can specify which site links you DO NOT want to show." for the obvious reason that Google only allows you to remove links.

Peter, one thing you can get from the report showing all pages with internal links is a list of pages in the "primary" index.

Google abolished the designation "supplemental index" about 2 years ago, but if a page in your site does not appear in the webmaster central report of pages with internal links, it will show a graybar toolbar page rank and it is essentially supplemental. These pages will rarely rank.

Great tip Jonah :)

Dear sir ,

I used this tool last year, and now the number of indexed results drops every day.

You can see this with "site:onvon.com". We don't know why, and we have resubmitted the sitemap many times.

Our sitemap is onvon.com/smproducts.xml

Could you give me some advice on how to change this situation?

@raptorzhang

Why do you display recent orders on your site with their details. Doesn't that go against most privacy policies? I'm not sure but it looked like people could get someone's address and what they ordered - that's pretty out-security if that's the case. You might want to fix that.

Hi Aaron,

Regarding the search queries, I don't know how reliable Google is when making that list. Do you have any idea which database they use when making the top search queries? I have some great rankings in there that are not reflected on Google when searching.

By the way I use your Rank Checker for that purpose.

I haven't looked at it 100%, but from glancing at it the stats looked fairly accurate to me. Are you seeing a lot missing? A little missing? What sort of sample are you seeing?

Thanks for your reply Aaron.

Actually I see a 5 - 10 position error on my dashboard. If you want I can send you an email with all the detailed info, I don't want to bother you with this, but if you want I can do that. Just to give you an example, my Top Search Queries showed me that I am 8th for the "Converse" keyword, which I know I am not. I checked my dashboard from different IPs to see if there was any difference, and I also searched in different Google databases, but it seems that I can't find my 8th position anywhere.

Best Regards.

The algorithms change over time, and I think those show average rank (perhaps average rank ***while you were ranking*** for that keyword) rather than current rank.

Hey Aaron,

I know this is an old post but I'm hoping you can help me out with your thoughts on this one - I recently had a big breakthrough with ranking in the top 3 for a couple of very competitive keywords. I'd like to replicate this on other sites I have in the same niche but for slightly different keywords. My seo practices will be similar to how I got my 1st site ranked. (All whitehat legitimate methods but might have incoming links from same sites, directories, etc.)

My question is - do you recommend I stay away from placing these new sites onto my same Webmaster account? For that matter should I stay away from using the same Google Analytics account?

Any of your experiences or thoughts would help. Thanks.

What real benefit do you gain by connecting your sites together? Any?

Hey Aaron,

There is no benefit to connecting sites together; in fact I'd prefer not to but I figured there is an advantage to submitting my sitemap from all my sites to webmaster tools. Have you seen any benefit to submitting sitemaps? If not, that solves my problem. But if submitting a sitemap is beneficial, how do you avoid submitting sitemaps from multiple sites onto the same webmaster account?

Thanks in advance.

You don't have to submit sitemaps inside of webmaster tools...you can reference a sitemap in your robots.txt file.
