Once a training ground for novice SEOs, local search has evolved into a complex, unpredictable ecosystem dominated by Google. Corporations and mom-and-pop shops alike are fighting for their place in the sun. It's everybody's job to make the best of local Internet marketing because its importance will continue to grow.

This guide is geared towards helping you deepen your understanding of the local search ecosystem, as well as local Internet marketing in general.

I hope that, after you finish reading this guide, you will be able to make sense of local Internet marketing and use it to grow your business or help your clients do the same.

Objectives, Goals & Measurements Are Crucial

Websites exist to accomplish objectives. Regardless of company size, business models and market, your website needs to bring you closer to accomplishing one or more business objectives. These could be:

- Customer Acquisition

- Lead Generation

- Branding

- Lowering sales resistance

- etc.

Although not exciting, this is a crucial step in building a local Internet strategy. It will determine the way you set your goals, largely shape the functionality of your website, guide you in deciding what your budget should be and so on.

Getting Specific With Measurement

Objectives are too broad to work with. They exist on a higher level and are something company executives/leadership need to set.

This is why we need specific goals, KPIs and targets. Without getting into too many details, goals could be defined as specific strategies geared towards accomplishing an objective.

For example, if your objective is to “grow your law firm,” a good goal derived from that would be to “generate client inquiries”. Another one would be to use the website to get client referrals.

When you have all this defined, you need to set KPIs. They are simply metrics that help you understand how you are doing against your objectives. For this imaginary law firm, a good KPI would be the number of potential client leads. After you set targets for your KPIs, you have completed your measurement framework. To learn more about measurement models, you can read this post by Avinash Kaushik.

These will be the numbers that you or your client should care about on a day to day basis.
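As a rough illustration, the objective, goal, KPI and target for the imaginary law firm could be written down like this. The `Kpi` class, the target of 30 leads and the actual figure of 24 are all hypothetical values chosen for the sketch, not numbers from a real campaign.

```python
from dataclasses import dataclass


@dataclass
class Kpi:
    """A metric with a target, used to judge progress toward a goal."""
    name: str
    target: float
    actual: float

    def progress(self) -> float:
        # Share of the target reached so far (1.0 means the target is met).
        return self.actual / self.target


# Hypothetical measurement framework for the imaginary law firm.
framework = {
    "objective": "Grow the law firm",
    "goal": "Generate client inquiries",
    "kpis": [Kpi("Potential client leads per month", target=30, actual=24)],
}

for kpi in framework["kpis"]:
    print(f"{kpi.name}: {kpi.progress():.0%} of target")
```

Keeping the target next to the actual number makes it easy to see at a glance which KPIs need attention.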

Lifetime Customer Value And Cost Of Customer Acquisition

Regardless of size, every local business needs to know its average lifetime customer value and its cost of customer acquisition.

You need to know these numbers so you can set your marketing budget and know whether you are on the path to going out of business despite acquiring lots of customers.

Lifetime customer value (LTV) is the revenue you expect from a single customer over the lifetime of their relationship with your business. If you are having trouble calculating this number for your or your client's business, use this neat calculator made by Harvard Business School.

Customer acquisition cost (CAC) is the amount of money you spend to acquire a single customer. The formula is simple: divide the sum of your sales, marketing and overhead costs by the number of customers you acquired in a given period.
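The CAC formula can be sketched in a few lines. The dollar figures and the LTV value below are made up for illustration; plug in your own numbers for the same period.

```python
def customer_acquisition_cost(sales_cost, marketing_cost, overhead_cost, customers_acquired):
    """CAC = (sales + marketing + overhead costs) / customers acquired in the same period."""
    if customers_acquired <= 0:
        raise ValueError("customers_acquired must be positive")
    return (sales_cost + marketing_cost + overhead_cost) / customers_acquired


# Hypothetical quarterly figures.
cac = customer_acquisition_cost(
    sales_cost=6_000, marketing_cost=9_000, overhead_cost=3_000, customers_acquired=40
)
ltv = 1_200  # assumed lifetime customer value, taken from your own records

print(f"CAC: ${cac:,.2f}")
if ltv > cac:
    print("Each customer is worth more than it costs to acquire")
else:
    print("Warning: acquiring a customer costs more than the customer is worth")
```

Comparing the two numbers side by side is the quickest way to spot a business that is growing its customer count while losing money on each new customer.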

LTV & CAC are the magic numbers.

You can use them to sell Internet marketing services, as well as to demonstrate the value of investing heavily in Internet marketing.

Understanding and using these metrics will put you and your clients ahead of most competitors.

Stop - It's Budget Time

Now that you have your business objectives, customer acquisition costs and other KPIs defined, and their targets set, it's time to talk budgets. Budgets will determine what kind of local Internet marketing campaign you can run and how far it can go.

Most companies don't have a separate Internet marketing budget. It's usually just a part of their marketing budget which can be anywhere from 2% to 20% of sales depending on a lot of factors including, but not limited to:

- Business objectives

- Company size

- Profit margins

- Industry

- etc.

What does this mean to you?

If you are selling services, you will need to have as much of this data as possible.

Planning And Executing Your Campaign

Now that you know what business objectives your local Internet marketing campaign has to accomplish, your targets, and your budget, you can start developing a campaign. It's easiest to think of this process if we break our campaign planning into small but meaningful phases:

- laying the groundwork,

- building a website,

- taking care of your data in the local search ecosystem,

- citation building,

- creating a great website,

- building links,

- setting up a review management system,

- expanding on non-organic search channels

- and taking care of web analytics.

Laying The Groundwork

Local search is about data. It's about aggregation and distribution of data across different platforms and technologies. It's also about accuracy and consistency.

This is the reason why you need to start with a NAP audit.

NAP stands for name, address and phone number. It's the anchor business data and should remain accurate, consistent and up to date everywhere. In order to make it consistent, you first need to identify inaccurate data.

This is easier than it sounds.

You can use Yext.com or Getlisted.org to quickly check your data accuracy and consistency in the local search ecosystem.
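If you would rather spot-check a handful of listings by hand, the core of a NAP audit can be sketched as a normalize-and-compare step. Everything here is an assumption made for illustration: the business, the directory names, and the simple normalization rules (lowercasing, stripping punctuation, keeping the last ten phone digits for US numbers). It will not catch abbreviation differences such as "St" versus "Street".

```python
import re


def _clean(text):
    """Lowercase, drop punctuation, and collapse repeated spaces."""
    text = re.sub(r"[^a-z0-9 ]", "", text.lower())
    return re.sub(r"\s+", " ", text).strip()


def normalize_nap(name, address, phone):
    """Reduce a listing to a comparable (name, address, phone) tuple."""
    digits = re.sub(r"\D", "", phone)
    return (_clean(name), _clean(address), digits[-10:])  # last 10 digits: US numbers


def find_inconsistent(reference, listings):
    """Return the sources whose NAP differs from the reference record."""
    expected = normalize_nap(*reference)
    return [source for source, nap in listings.items() if normalize_nap(*nap) != expected]


# Hypothetical data: the correct record and two listings found online.
reference = ("Smith & Jones Law", "12 Main St., Portland, ME", "(207) 555-0100")
listings = {
    "directory-a": ("Smith & Jones Law", "12 Main St, Portland, ME", "207-555-0100"),
    "directory-b": ("Smith and Jones Law", "12 Main Street, Portland, ME", "+1 207 555 0199"),
}

print(find_inconsistent(reference, listings))
```

Listings flagged this way are the ones to correct first, starting with the upstream sources.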

Start With Data Aggregators

Data aggregators or compilers are companies that build and maintain large databases of business data. In the US, the ones you should keep an eye on are Neustar/Localeze, Infogroup (formerly InfoUSA) and Acxiom.

Why are data aggregators important?

They are upstream data providers. This means that they provide baseline and sometimes enhanced data to search engines (including Google), local and industry directories. If your data is wrong in one of their databases, it will be wrong all over the place.

Usually, your business data goes bad for one or more of these reasons:

- You changed your phone number;

- You moved to another location;

- You used lots of tracking numbers;

- You made lots of IYP advertising deals where you wanted to target multiple towns/cities;

- etc.

If you or your client have a data inconsistency problem, the fix will start with the aggregators.

Before you embark on a data correction campaign, keep in mind that data aggregators take their data seriously. You will need to have access to the phone number on the listing you are trying to claim and verify, an email on the domain of the site associated with the business, and sometimes even scans of official documents.

Remember - after you fix your data inaccuracies with the aggregators, it's still a smart idea to claim and verify listings in major IYPs as data moves slowly from upstream data providers to numerous local search platforms your business is listed in.

Building Citations Is Important

Simply put, citations are mentions of your business's name, address and phone number (full citation) or name and phone or address (partial citation).
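The full versus partial distinction can be expressed as a small check against the text of a page. This is a naive sketch: the function name, the sample business and the exact-substring matching are assumptions for illustration, and real pages would need fuzzier matching.

```python
import re


def classify_citation(page_text, name, address, phone):
    """Classify a mention as 'full', 'partial' or 'none' using exact substring matches."""
    text = page_text.lower()
    has_name = name.lower() in text
    has_address = address.lower() in text
    # Compare phone numbers on digits only so formatting differences do not matter.
    has_phone = re.sub(r"\D", "", phone) in re.sub(r"\D", "", page_text)

    if not has_name:
        return "none"
    if has_address and has_phone:
        return "full"
    if has_address or has_phone:
        return "partial"
    return "none"


# Hypothetical business details.
nap = ("Acme Coatings", "5 Mill Rd, Bangor, ME", "(207) 555-0142")

print(classify_citation("Visit Acme Coatings at 5 Mill Rd, Bangor, ME or call 207-555-0142.", *nap))
print(classify_citation("Acme Coatings did great work. Call 207-555-0142.", *nap))
print(classify_citation("Some coatings company downtown.", *nap))
```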

Just like links in “general” organic search, citations are used to determine the relative importance or prominence of your business listing. If Google notices an abundance of consistent citations, it concludes that your business is legitimate and important, and you get rewarded with higher search visibility.

The more citations your business has, the more important it will be in Google's eyes. Oh, there is also a little matter of citation quality as not all citations are created equal. There are also different types of citations besides full and partial.

Depending on the source, citations can come from:

- your website;

- IYPs like YellowPages.com;

- local business directories like Maine.com;

- industry websites like ThomasNet.com;

- event websites like Events.com;

- etc.

We could group citations by how structured they are. This means that a citation on YellowBook.com is structured, but a mention on your uncle's blog is not. Google prefers the first type. The bulk of your citation building will be covered by simply making sure that your data in major data aggregators is accurate and up-to-date. However, there's more to citations than that.

What Makes Citations Strong?

Conventional wisdom tells us that citation strength depends mostly on the algorithmic trust that Google has in the source of your citation. For example, if you are a manufacturer of industrial coatings, a mention on ThomasNet.com would help you significantly more than a mention on a blog from some guy who has visited your facility once.

You also want your citations to be structured, relevant and to have a link to your website for maximum benefit.

How To Build Citations?

You already started by claiming and verifying your listings with major data aggregators. Since you are very serious about local search, you will make sure to claim and verify listings with major IYPs, too.

Start with the most important ones:

- Yellowpages.com;

- Yelp.com;

- local.yahoo.com;

- SuperPages.com;

- Citysearch.com;

- Insiderpages.com;

- Manta.com;

- Yellowbook.com;

- Yellowbot.com;

- Local.com;

- dexknows.com;

- MerchantCircle.com;

- Hotfrog.com;

- Mojopages.com;

- Foursquare.com;

- etc.

You shouldn't forget business and industry associations such as bbb.org or your local chamber of commerce. Here's where you can find your local chamber of commerce.

Industry Directories Are An Excellent Source Of Citations

Industry directories such as Avvo.com for lawyers or ThomasNet.com for manufacturers are not just an excellent source of citations, but are also great for your organic search visibility in the Penguin Apocalypse.

How do you find those?

You can use a couple of tools:

Want even more citations?

Then pay attention to daily deal and event sites. Don't forget charity websites either. If you are one of those people that are obsessed with how everything about citations works, I recommend this (the one and only) book/guide about citations by Nyagoslav Zhekov.

Make Your Website Great

While it's possible to achieve some success using just Google Places and other platforms to market a local business, it's not possible to capture all the Web has to offer.

Your website is the only web property you will fully control. You have the freedom to track and measure anything you want, and the freedom to use your website to accomplish any business objective.

Marry Keyword And Market Research

There's nothing more tragic or costly than targeting the wrong keywords and trying to appeal to demographics that don't need your services/products.

To run a successful local Internet marketing campaign, you cannot rely on quantitative data (keywords) alone; you also need to conduct qualitative market research. This is very important, as it will reduce your risks as well as your acquisition costs if done right.

Let's start with keyword research.

Getting local keyword data has always been a challenge. Google's recent decision to withhold organic keyword data hasn't made it any easier. However, Google itself has provided us with tools to get relatively reliable keyword data for any local search campaign.

Coupled with data from SEOBook Keyword Tool, Ubersuggest, and Bing's Keyword Tool, you will have plenty of data to work with.

Of course, you shouldn't forsake the market research side of the equation.

You and/or your client can survey their customers to discover how exactly they describe your business, your services/products or your geographic area. For example, you'll learn if there are any geographical nuances that you should be aware of, such as:

- DFW (Dallas/Fort Worth)

- PDX (Portland)

- OBX (Outer Banks)
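Once you know which geographic terms your customers actually use, you can combine them with your service terms to build a candidate list for the keyword tools. The service phrases and modifiers below are hypothetical examples, and the function is only a sketch of the idea.

```python
from itertools import product


def keyword_candidates(services, geo_modifiers):
    """Combine each service phrase with each geographic modifier, in both word orders."""
    candidates = []
    for service, geo in product(services, geo_modifiers):
        candidates.append(f"{geo} {service}".lower())
        candidates.append(f"{service} {geo}".lower())
    return candidates


# Hypothetical inputs taken from customer surveys.
services = ["divorce lawyer", "family attorney"]
geo_modifiers = ["Dallas", "Fort Worth", "DFW"]

for keyword in keyword_candidates(services, geo_modifiers):
    print(keyword)
```

The resulting list is only a starting point; search volume data decides which combinations are worth targeting.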

Use this data against keyword research tools. If you're running AdWords, you can get an accurate idea of search volumes. To do that, click the Campaign tab, followed by the Keywords tab, then Details and then Search Terms. This data can be downloaded. The video below shows how you can get accurate search volume data if running AdWords.

Keep in mind that the quality of data using this method depends on your use of keyword matching options. This practically means that if you want to get exact match search volumes for a certain number of keywords, you have to make sure to have those keywords set as exact match.

If you're not running AdWords, Google gives you a chance to get a good representation of your local search market using the Keyword Planner as described in this post.

Content And Site Architecture

Largely, your content will depend on your business objectives, brand and the results of your keyword research. The time of local brochure type sites has long passed, at least for businesses that are serious about local Internet marketing.

Local websites are no different from corporate websites when it comes to technical aspects of SEO. Performance and crawlability are very important, as well as proper optimization of titles, headings, body text etc.

However, unlike corporate websites, local sites will benefit more from:

- “localization” of testimonials - it's not only important to get testimonials, but it's crucial to make sure that your visitors know where those testimonials came from.

- “localization” of galleries, as well as “before and after” photos - similar to testimonials, you can leverage social proof the most if your website visitors can see how your services/products helped their neighbours.

- location pages - pages about a specific city/town where you or your client have an office or service area. Before you go on a rampage creating hundreds of these pages, don't forget that they need to add value to the users, and not just be copy/pasted from Wikipedia. The way to add value is to make them completely unique and useful to your visitors. For example, location pages can show the specific directions to one of your offices or store-fronts. You don't have the “big brand luxury” of ranking local pages that have virtually all of their content behind a paywall.

- local blogging - use your blog to connect with local news organizations, charities and industry associations, as well as local bloggers. In addition, blog about your industry; this way, you will get the best of both worlds.

- adopting structured data - using schema markup, you can increase click-through rates from the SERPs and get a few other SEO benefits. You can use the Schema Creator to save time.

- adopting “mobile” - everyone knows that local search is increasingly mobile. Mobile websites are not a luxury but a necessity. Luckily for you or your clients, you don't have to invest a lot of resources in developing a mobile site. You can use tools such as dudamobile.com or bmobilized.com to create a fully functional mobile website in hours.
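To make the structured data point from the list above more concrete, here is a minimal sketch that generates schema.org `LocalBusiness` markup. It assumes you want JSON-LD output (the guide does not name a format), and the business details and the `local_business_jsonld` helper are hypothetical.

```python
import json


def local_business_jsonld(name, street, city, region, postal_code, phone, url):
    """Build a JSON-LD <script> snippet describing a local business."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "telephone": phone,
        "url": url,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


# Hypothetical business details; use the same NAP you publish everywhere else.
snippet = local_business_jsonld(
    "Smith & Jones Law", "12 Main St.", "Portland", "ME", "04101",
    "+1-207-555-0100", "https://www.example.com/",
)
print(snippet)
```

Whatever format you choose, the name, address and phone number in the markup should match your listings exactly.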

Link Building For Brick And Mortar Businesses

Links are still important. They are still a foundation of high organic search visibility. They still demand your resources.

But a lot has changed since Penguin. Building links has become a delicate endeavor even for local websites. But there is a way to triumph: all you need to do is change how you view local link building.

See link building as marketing campaigns that have links as a by-product.

What does that mean? It means that you are promoting your business as if Google doesn't exist. Link and citation building overlap to a certain extent. They do so in a way that makes good links great citations, especially if they're structured.

Join Business Associations

BBB.org has an enormous amount of algorithmic trust. It's also an excellent citation. As a bonus, by displaying the BBB badge prominently on your website, you will likely receive a boost in conversion rates. The same is true of your local chamber of commerce. Would you join those if Google was not around?

You probably would.

Join Industry Associations

Every industry has associations you or your client can join. You will get benefits similar to those you can expect from the BBB. However, being a member of trade associations will add an additional layer of value to your business in the form of education or certifications.

Charity work

Every business should give back. Sometimes you will get a link and sometimes you will not, but you will always benefit from this type of community involvement.

Industry websites

There are plenty of industry websites and directories in almost every industry. Sometimes these websites can refer significant traffic to you, but they almost always make for a good link and a solid citation.

Organize Events

Events are good for business. If you organize them you should make sure that it's reflected on the web. There are plenty of websites you can submit your event to. Google is not likely to start considering organizing offline events spam any time soon.

Find Local Directories

Every state has a few good ones. It's likely that your town has an online business directory you can join. These types of links can make good citations too. They are usually easy to acquire.

Local Blogs

It pays to be a friend of your “local blogosphere”. Try to include local bloggers in your community involvement, offer to contribute content or offer giveaways.

Truly Integrate Link Building Into Your Marketing Operations

Whenever possible, make sure your vendors link to you:

- If you're offering discounts to any organization, make sure it's reflected on their website.

- If you're attending an industry show or an event, give a testimonial and get a link.

- If you get press, remind the reporter to link to your website.

Review Management

In local search, customer reviews are bigger than life. Consumers trust online reviews as much as personal recommendations, and a majority (52%) say that positive online reviews make them more likely to choose a local business. The influence reviews have on your local business goes well beyond social proof. Good reviews can boost your local search visibility, while bad reviews can destroy your business.

Reviews - The Big Picture

Every organization that strives to get better at what it does should use consumer reviews to improve its business operations. Customer reviews should be treated as one of the most valuable pieces of qualitative data. You should be surveying your customers daily and using their feedback to improve your services, products, customer service etc.

This holds true for corporations as well as mom-and-pop shops. It's not complicated to ask your customers about specific aspects of their experience with your business and record their answers. It's not expensive, either.

The benefits of taking reviews seriously are enormous:

- More search visibility;

- Less potential for online reputation management issues;

- Increased credibility.

What can you do to win at review management?

Since you need to get highly rated, positive reviews on different websites in a way that doesn't break any guidelines and keeps you out of jail, your best bet would be to use reviews as a customer service survey tool.

This means that you should seek customer feedback systematically in order to improve your or your client's business. You can ask your most ecstatic customers to share their experiences with your services/products on major local search platforms. Remember that you cannot provide any type of incentive for this behavior.

To save time, you can use a tool such as GetFiveStars.com. This tool will do everything described above.

Think Beyond Organic Search

Internet marketers tend to be blindly focused on organic search. It's understandable - organic traffic is relatively cheap (in most markets) and seemingly unlimited.

It's also a mistake.

The organic search channel is becoming increasingly unstable and, with that, organic traffic is becoming more expensive to acquire. Since you're aware of your customer acquisition cost and have a measurement framework, it's easy to know how affordable traffic from other sources is for your business.

Paid Search Traffic

Paid search advertising works, especially if you did a good job gearing your site for conversion. You shouldn't leave your PPC budget to Google, though. Bing/Yahoo! are a more affordable source of paid traffic with similar conversion rates.

If you're planning to run a local paid campaign, don't forget to:

- target geographically;

- use negative keywords and

- be fanatical about acquisition cost.

You can also read this post by PPC Hero on what you should keep in mind when running local search advertising campaigns. You can also check out this post on Search Engine Land about managing and measuring local PPC campaigns.

Internet Yellow Pages (IYPs) Sites

Sites like YellowPages.com or SuperPages.com don't have the traffic Google or even Bing get, but they do have a significant amount of traffic. They also have traffic that's at the very end of the buying cycle. This is the reason one should be serious about IYPs.

What does that mean?

It means that you should have most of the big IYP listings claimed, verified and optimized to the best of your ability. So use every element of your listing to sell your products/services. In a lot of markets, it's wise to explore advertising opportunities, as well.

If you want to take an extra step, or simply lack the time, you can sign up with a service such as Yext.com and control the major IYP listings from a single dashboard.

Keep in mind, though, that Yext.com doesn't come for free, and you will have to pay a few hundred dollars for a year of service.

Another avenue to take would be to outsource this process. In this scenario, you will most likely pay a one-time fee for verification and optimization of a predetermined number of listings. However, if you would like to change some of your business information somewhere down the road (such as name and phone number), you will have to go through this process from the beginning.

Social Media

These days, social media means a lot of things to a lot of different people. Local businesses should use social media platforms to connect with customers that love them. Empower these customers and give them an incentive to recommend you to their family and friends.

You should automate as much of your social media efforts as possible. You can use tools like HootSuite or SocialOomph.

Always try to add value in your interactions and never spam your follower base.

Classified Sites

It's amazing how many businesses fail to build a presence on classified sites like Craigslist.org. Even though the Craigslist audience is the type of audience that is always on the lookout for a great deal, the buying intent is very strong.

If you'd like to get the most out of Craigslist and other classified sites, remember to make your ads count. You need:

- persuasive copy;

- targeted ads;

- special deals;

- etc.

Other sources of non-search traffic you should explore are local newspaper advertising, ads on big industry websites, local blogs and others.

Tracking And Web Analytics

If there's only one thing local businesses should care about, it's tracking. As we established in the beginning of this guide, everyone needs to know how much they can afford to spend in order to acquire a customer.

Proper tracking ensures that you don't make the mistake of spending too much on customer acquisition or spending anything on acquiring the wrong type of customer.

You can use a number of free or low cost web analytics solutions, including Clicky, KissMetrics, Woopra and Google Analytics.

If you're like most people and don't care if Google has access to your data, you can use Google Analytics. Take advantage of custom reporting and advanced segmentation.

In order to make the most out of the traffic you get, and to get more of the traffic that is right for your business, you should create custom reports. They will enable you to know how you're doing against your targets.

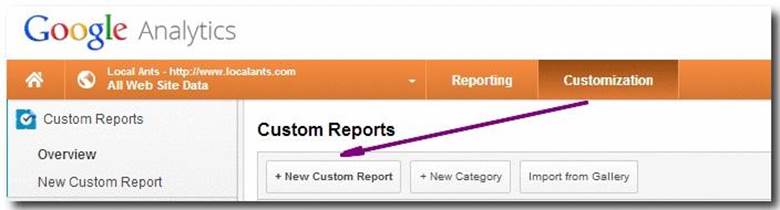

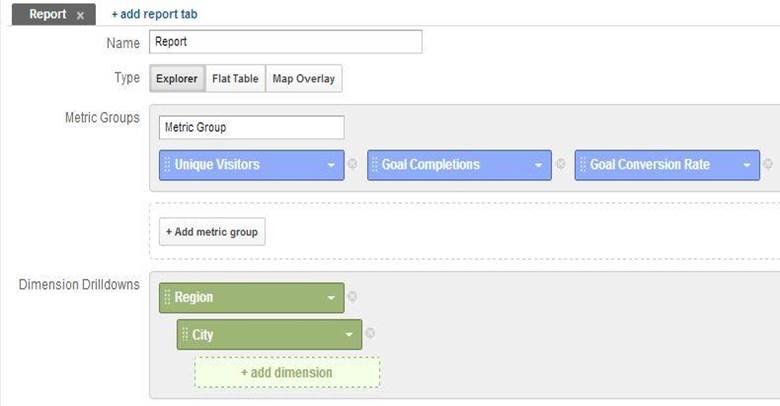

To create a custom report, click the “Customization” tab in Analytics and then click the “New Custom Report” tab.

Pick your metrics first. I recommend Unique Visitors and Conversion Rate, coupled with a geographic dimension.

Tracking Offline Conversions

This step is crucial for local businesses that want to measure performance. Fortunately, this is not as complicated as it sounds. Depending on the type of your campaign, you can use tracking phone numbers, web-only discount codes as well as campaign-specific URLs.
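Campaign-specific URLs are the easiest of the three to generate in bulk. The sketch below assumes you tag links with the `utm_source`, `utm_medium` and `utm_campaign` query parameters that Google Analytics recognizes; the landing page, campaign names and `campaign_url` helper are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def campaign_url(base_url, source, medium, campaign):
    """Append campaign tracking parameters to a landing page URL."""
    parts = urlsplit(base_url)
    query = parse_qsl(parts.query)  # keep any parameters already on the URL
    query += [
        ("utm_source", source),
        ("utm_medium", medium),
        ("utm_campaign", campaign),
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(query), parts.fragment))


# One tagged URL per channel, so each channel shows up separately in your reports.
print(campaign_url("https://example.com/spring-offer", "craigslist", "classified", "spring_sale"))
print(campaign_url("https://example.com/spring-offer", "local-paper", "print", "spring_sale"))
```

For print or radio, pair the tagged URL with a short, memorable address that redirects to it, so customers never have to type the parameters.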

Avinash Kaushik has written extensively on the best ways to track offline conversions. I highly recommend this post.

Tying It All Together

Focus on improving the quality of products you sell and/or services you provide. Remember that every Internet marketing campaign works better if you're able to provide a remarkable experience for your customers.

Build your brand and make your customers fall in love with your business. That would make every aspect of your marketing, especially Internet marketing, work better.

Vedran Tomic is a member of SEOBook and founder of Local Ants LLC, a local internet marketing agency.